ChatGPT vs Claude vs Gemini: The Definitive 2026 Comparison

We tested GPT-5.4, Claude Sonnet 4.6, and Gemini 3.1 Pro across coding, writing, and reasoning. Here's where each one actually wins.

For the past three months, I've been running every meaningful work task through all three of the major AI assistants — ChatGPT, Claude, and Gemini — and keeping notes on where each one wins, where it disappoints, and which one I'd reach for in different situations.

The honest answer is that there is no single best AI in 2026. But there absolutely are better and worse choices depending on what you're trying to do. Here's what I've learned.

The Short Version

Reach for Claude when the quality of writing or reasoning matters and you have time to get the best output. Reach for ChatGPT when you need something done quickly, want the most versatile tool, or are working on code. Reach for Gemini when you need current information or you live inside Google Workspace.

If you want the full breakdown, read on.

Writing Quality: Where the Gap Is Bigger Than People Realise

Writing is where Claude most clearly separates itself from the competition. Not on short tasks: a single paragraph or a few bullet points will come out similarly from all three. But on anything longer than about 300 words, the quality difference becomes noticeable.

Claude's outputs read more naturally. Sentences vary. The logic flows. It doesn't default to the slightly stilted, over-qualified writing that plagues most AI-generated content. When I ask Claude to write in a specific style or voice, it honours that constraint more reliably than the others.

ChatGPT produces clean, competent writing that reads slightly more like polished template content. It's excellent for structured outputs (listicles, how-to guides, step-by-step instructions) where the format itself carries a lot of the work. The issue is that it can feel formulaic on tasks that require genuine stylistic judgement.

Gemini's writing is functional but noticeably behind the other two on nuance. It's improved significantly across versions, but for anything where the quality of the prose matters — a piece representing your brand, a sensitive email, a persuasive proposal — I'd still reach for Claude or ChatGPT first.

Winner: Claude — especially on longer, more nuanced writing tasks.

Reasoning and Analysis: Closer Than You'd Think

For complex analysis (working through multi-step problems, evaluating trade-offs, structuring an argument), ChatGPT and Claude are genuinely comparable and both excellent. The gap here is not as large as the writing gap.

Where I've noticed Claude pulling ahead is on tasks that require careful, sustained reasoning over a long context. Ask it to analyse a long document and identify internal contradictions, or to evaluate a complex argument and find the weakest assumptions, and Claude tends to do so more carefully than ChatGPT, which can sometimes rush to a conclusion.

Gemini's reasoning has improved substantially in recent model versions and is now competitive for most everyday analytical tasks. It handles mathematical problems particularly well, which reflects Google's research investment in that area.

One important caveat: all three models can produce confident-sounding reasoning that's wrong. For high-stakes analysis — legal, financial, medical — treat AI output as a structured starting point that requires expert verification, not a finished conclusion.

Winner: Slight edge to Claude on deep analysis, effectively tied for most everyday reasoning.

Research and Current Information: Gemini's Clear Advantage

This category is not close. Gemini is connected to Google Search and accesses current information as a standard feature. Ask it about something that happened last week and it can usually answer. This is a structural advantage that ChatGPT and Claude simply don't have in their standard free tiers.

ChatGPT with browsing enabled closes the gap somewhat, but the Gemini integration feels more seamless — it doesn't have the awkward "let me search for that" step that ChatGPT's browsing mode sometimes exhibits.

One tool worth mentioning separately is Perplexity AI. It isn't a focus of this comparison, but it is arguably the best purpose-built research tool available. For anything where you need cited, sourced, current information, Perplexity is worth adding to your toolkit alongside whichever primary assistant you use.

Claude's knowledge cuts off at its training date and it doesn't browse by default, making it the wrong choice for anything time-sensitive.

Winner: Gemini — and it's not especially close.

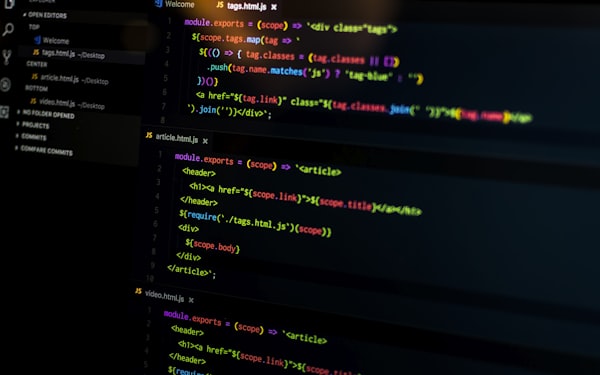

Coding: ChatGPT's Home Territory

Ask developers which AI they'd choose for coding and most will say ChatGPT — and they're right, though the gap is narrower than it used to be.

ChatGPT is fast, handles a wide range of languages confidently, and has a large body of code-focused training data. It is also excellent at debugging: paste in an error and a code block and it almost always identifies the issue correctly. The GitHub Copilot integration, which runs on GPT-4 class models, has become indispensable for many developers.

Claude is an excellent close second, and arguably better for specific tasks: understanding unfamiliar codebases, explaining complex code in plain English, catching subtle logic errors that aren't syntax errors. I've seen Claude identify architectural issues in code that ChatGPT missed.

Gemini is strong within the Google ecosystem — Android Studio integration, Google Cloud services, Python for data work. Outside that context, it's capable but not the first choice.

Winner: ChatGPT for general coding — though Claude is worth trying for code review and explanation tasks.

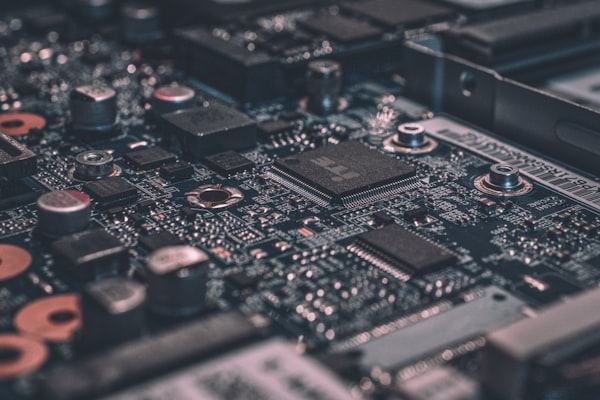

Context Window: Why This Matters More Than Most People Realise

The context window is the amount of information an AI can hold in memory at once — your prompt, the conversation history, any documents you've pasted in. Larger context windows mean you can work with longer documents, more complex problems, and longer conversations without the AI losing track of earlier material.

Claude has consistently pushed the frontier here. The current Claude models can handle extremely long documents — full research papers, entire codebases, lengthy contracts — in a single conversation. For power users who regularly work with long-form content or large documents, this is a genuine differentiator.

GPT-4o handles up to 128,000 tokens, which is plenty for most use cases. Gemini's context window is also large and competitive.

In practice, for everyday tasks, context window differences won't matter. But if you regularly work with documents longer than 10,000-15,000 words, Claude's advantage here is real and meaningful.
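
Context limits are quoted in tokens, while most of us think in words, so a quick conversion helps. The sketch below is a back-of-the-envelope estimate, not a real tokenizer: it assumes the common rule of thumb that English prose runs at roughly three-quarters of a word per token. The function names and the 4,000-token reserve for your prompt and the reply are my own illustrative choices.

```python
# Rule of thumb (an assumption, not an exact figure): one token is about
# 0.75 English words, so one word is about 4/3 tokens. Real tokenizers
# vary by model, language, and content type (code tends to use more).

def estimate_tokens(word_count: int) -> int:
    """Estimate the token count of English prose from its word count."""
    return round(word_count * 4 / 3)


def fits_in_context(word_count: int, context_tokens: int,
                    reserve_tokens: int = 4_000) -> bool:
    """Check whether a document fits in a context window.

    reserve_tokens is a hypothetical allowance for the prompt and the
    model's reply, which share the same window as the document.
    """
    return estimate_tokens(word_count) + reserve_tokens <= context_tokens


if __name__ == "__main__":
    window = 128_000  # the GPT-4o figure quoted above
    for words in (10_000, 15_000, 90_000, 96_000):
        print(words, estimate_tokens(words), fits_in_context(words, window))
```

By this estimate a 15,000-word document comes to about 20,000 tokens. Conversation history and any additional documents count against the same window, so the total in a long working session can grow well beyond the size of a single file.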

Winner: Claude — though the others are sufficient for most everyday use cases.

Speed and Reliability

ChatGPT and Gemini are generally faster than Claude at producing outputs. On short tasks, the difference is negligible. On long outputs — a 1,000-word article, a detailed analysis — Claude can take noticeably longer.

Reliability is another consideration. All three services have experienced outages and capacity issues during peak usage. ChatGPT in particular has a history of rate-limiting free-tier users during busy periods. All three have improved significantly on this front, but it's worth knowing that "the AI is slow today" is a real phenomenon that will occasionally frustrate you regardless of which you choose.

Winner: ChatGPT and Gemini on raw speed.

Personality and Ease of Use

This is subjective, but it matters for how much you enjoy using these tools day-to-day.

ChatGPT feels like the most polished consumer product — years of UX iteration have made it feel intuitive and friendly. It's the easiest to recommend to someone who has never used AI before.

Claude has a more distinctive personality. It's thoughtful, sometimes opinionated, and will push back when it disagrees with you or when it thinks you're asking the wrong question. Some people find this frustrating; I find it one of its most useful qualities. An AI that occasionally says "I think there's a better way to approach this" is more valuable than one that just does exactly what you said.

Gemini feels most like an upgraded search tool. That's not a criticism — for the tasks where you'd previously have used Google, it's a significantly better experience. But it doesn't have the conversational depth of the other two for complex collaborative work.

Which One Should You Start With?

If you're new to AI: Start with ChatGPT. It's the most polished experience, handles the widest range of everyday tasks well, and has the most documentation, tutorials, and community knowledge around it.

If you do a lot of writing or analysis: Add Claude. After a few weeks with ChatGPT, try Claude for your writing tasks. The quality difference on longer, more nuanced work is noticeable.

If you're embedded in Google's ecosystem: Use Gemini as your daily driver. The Search integration and Workspace connectivity are meaningful advantages if that's where your work happens.

The Real Answer

The best AI users in 2026 aren't loyal to one tool. They understand what each one is good at and switch based on the task. It takes about 10 seconds to have all three open in different tabs.

Use ChatGPT for quick tasks and coding. Use Claude when quality matters. Use Gemini when you need current information or are working in Google. That's it. That's the strategy.
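
If it helps to see that strategy as a lookup table, here is a minimal sketch. The task categories and the `pick_assistant` name are hypothetical labels of mine; the mapping simply restates the recommendations in this article and is not an objective benchmark.

```python
from enum import Enum


class Task(Enum):
    """Illustrative task categories (my own labels, not a standard taxonomy)."""
    QUICK = "quick"
    CODING = "coding"
    LONG_WRITING = "long_writing"
    DEEP_ANALYSIS = "deep_analysis"
    CURRENT_INFO = "current_info"
    GOOGLE_WORKSPACE = "google_workspace"


# The article's recommendations expressed as a routing table.
ROUTES = {
    Task.QUICK: "ChatGPT",
    Task.CODING: "ChatGPT",
    Task.LONG_WRITING: "Claude",
    Task.DEEP_ANALYSIS: "Claude",
    Task.CURRENT_INFO: "Gemini",
    Task.GOOGLE_WORKSPACE: "Gemini",
}


def pick_assistant(task: Task) -> str:
    """Return the assistant this article suggests for a given kind of task."""
    return ROUTES[task]


if __name__ == "__main__":
    for task in Task:
        print(f"{task.value}: {pick_assistant(task)}")
```

Treat the table as a starting point and adjust it as your own notes accumulate; the point is to switch tools by task rather than by habit.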